How Network Collapse Hits Agentic AI Systems and How to Avoid It

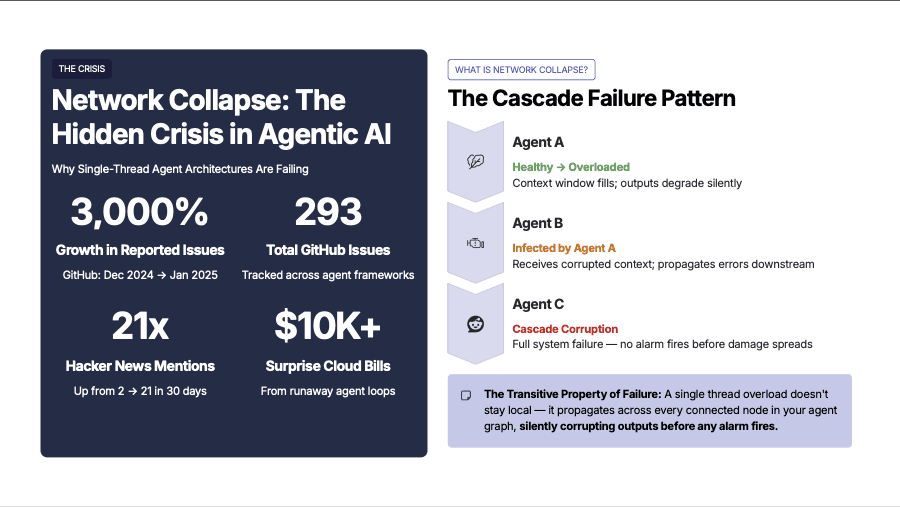

Network collapse occurs when an agent in an interconnected system loses cognitive coherence and triggers a cascading failure that degrades or disables the entire agent network.

Key takeaways

Network collapse occurs when an agent in an interconnected system loses cognitive coherence and triggers a cascading failure that degrades or disables the entire agent network.

Build resilience through architectural constraints like multi-conversation agents, controlled cross-network bridges, and rapid isolation rather than explicit behavioral instructions.

Single-thread architectures create inevitable failure modes; multi-conversation designs isolate degraded threads, preventing system-wide collapse and maintaining overall agent functionality.

By Michael Rollins—The potential for autonomous agents is almost beyond imagination. Business leaders want to use AI systems to revolutionize operations. But there is a fundamental flaw in how the tech industry is creating and implementing these systems.

That flaw has given rise to the term "network collapse." A couple years ago, this phrase had no meaning in the AI world. Now it pops up regularly in discussions about “the Claws”—OpenClaw and its clones.

Let's look at what network collapse is, why it's happening, and what the crisis reveals about building resilient autonomous systems. We'll also talk about one company that is zigging while the rest of the industry zags.

What is network collapse in an AI system?

Network collapse occurs when one AI agent in an interconnected system loses cognitive coherence and triggers a cascading failure that degrades or disables the entire agent network.

It's like having one of the people in your office go crazy—just starts ranting and rambling. It's infectious. All of a sudden, the entire office goes crazy because they keep trying to understand what the crazy person is saying.

Here's what happens:

As an agent operates over time, its context window gradually fills with conversation history, tool outputs, and intermediate reasoning steps. Eventually, the context window reaches capacity and loses the ability to summarize conversations across multiple context window summarizations. The important bits of the summary fall away, and context rot sets in at the beginning of the next context window.

The agent loses coherence. It can no longer maintain consistent reasoning about its objectives or current state. Downtime begins, and it will often begin looping on a single task or tool call, over and over.

In isolation, a single degraded agent is merely ineffective. But in interconnected agent networks, the problem multiplies exponentially. That first agent starts producing corrupted outputs and feeding them to other agents.

The degradation spreads through the network following the communication graph. What starts as a single point of failure becomes a cascade that brings down the entire system.

Why do single-thread architectures fail?

The fundamental architectural flaw is deceptively simple. Popular agentic AI implementations—including OpenClaw and similar systems—run each agent as a single conversational thread. This design decision, while seemingly intuitive, creates an inevitable failure mode.

A former Microsoft engineer who worked on Copilot agents recently told me: "The biggest issue right now in OpenClaw is network collapse. Entire research papers are emerging to address it."

These collapses can be costly. They can cause infinite loops, continuously calling APIs and hammering inference endpoints. Multiple reports exist of developers receiving bills exceeding $10,000 from a single collapsed agent running overnight.

Network collapse is more than a technical curiosity—it's a business crisis.

Why do agentic AI systems need guardrails?

Network collapse in agentic AI mirrors failures across industries deploying autonomous systems. A recent Forbes Technology Council analysis examined why agentic AI initiatives in retail are stalling after early success. The core insight: organizations are operating with the wrong mental model.

They're treating autonomous agents as simple automation tools rather than complex systems that require careful operational guardrails.

Here's an example. An autonomous inventory management system uses multiple agents to manage ordering, demand forecasting, and warehouse allocation. One agent misinterprets a demand signal—maybe a temporary spike in searches gets interpreted as a sustained trend. In a poorly architected system, this agent's over-ordering triggers cascading effects:

Warehouse allocation agents reallocate space based on expected inventory.

Pricing agents adjust margins to move products that will be massively overstocked.

Marketing agents launch campaigns for items that don't need promotion.

Organizations deploy agents without understanding how they communicate and fail to implement proper isolation and recovery mechanisms.

Network architecture requirements for agentic AI

Cisco published research arguing that "the agentic AI era demands a new network." Their analysis focuses on physical infrastructure—ultra-low latency networks that facilitate agent-to-agent communication at machine speed rather than human pace. Agents must exchange information in milliseconds, not seconds.

The insight is on target, but incomplete. Organizations can have millisecond-level network latency and still experience catastrophic network collapse. The missing piece is software architecture—how agents are designed and how they communicate internally.

The alternative to vulnerable single-thread agents is a multi-conversation architecture where each agent maintains multiple independent conversation contexts simultaneously. Instead of one agent equaling one conversation thread, one agent can host dozens or hundreds of concurrent conversations in isolation of each other.

The isolation benefit is crucial

When a multi-conversation agent experiences context window exhaustion in one thread, that thread degrades—but the agent itself remains functional. Other conversations continue operating normally. The degradation is isolated rather than systemic. This is fault tolerance at the architectural level.

Multi-conversation architecture creates resilience. Each agent effectively becomes "infinitely scalable"—capable of taking on multiple personas or handling multiple work streams without the risk that one failure contaminates everything else.

Here's the counter-intuitive insight about ultra-low latency networks: They're important, but not for the reasons most people assume. The primary benefit isn't making agents faster—it's making recovery faster.

When an agent or conversation thread degrades, rapid detection and isolation prevent the degradation from spreading. Speed enables resilience, not just performance.

Why everyone is building it wrong

Claude's "computer use" capability launched to enormous fanfare. OpenClaw has drawn intense scrutiny.

They are relatively "mature"—in the fast-moving AI space—but maturity doesn't equal correctness. The flood of network collapse issues suggests a design flaw with unavoidable consequences.

The main solution being explored is to add external pressure on agents. Developers are forcing agents to write things down, maintain explicit state, and follow rigid workflows. The thinking is that if agents are more disciplined, they'll avoid degradation.

This fails because external constraints reduce agent capabilities. Every explicit instruction about "how" to work is cognitive overhead that reduces capacity for thinking about "what" to accomplish.

When we make the constraints invisible to the agent, we find that they become more effective and more creative. Agents perform better when constraints are architectural (built into the environment) rather than instructional (part of the prompt).

The invisible constraint principle

Multi-conversation architecture is an invisible constraint. The agent doesn't need instructions about managing multiple contexts because the harness handles it automatically. The agent simply operates, and the architecture prevents systemic collapse.

At Rellify, our use of multi-conversation architectures means that agents naturally leverage the network without being instructed to do so. Because all of these conversations are happening within the same context of the agent, it is trivial for the agent to pick up a new thread if another thread fails. All of the context from the previous conversation has been captured.

Agents spontaneously delegate tasks to other agents, check results, and coordinate work. The behavior emerges from the architecture rather than requiring explicit prompting. This is network topology working in your favor rather than against you.

The contrarian insight on network collapse

Sometimes the entire industry converges on an approach not because it's optimal, but because it's familiar.

Single-thread conversational agents feel natural because they mirror how humans use chatbots. But what's intuitive for human-computer interaction isn't necessarily right for computer-computer interaction in autonomous systems.

And the question isn't whether they'll fail—it's whether the failure mode is containable or catastrophic.

Building collapse-resistant agent networks

Synthesizing everything we've learned, here are the architectural principles for building systems that can withstand network collapse:

1. Multi-conversation agents over single-thread agents

Design agents that maintain multiple independent conversation contexts. This isn't just a technical detail—it's the foundation of resilience. When one context degrades, the agent continues functioning. The degradation is localized rather than systemic.

This requires a fundamentally different agent harness than most frameworks provide. It's not a configuration option in existing systems—it's a different architectural paradigm. But the investment pays immediate dividends in reliability and fault tolerance.

2. Controlled inter-network communication

Establish hard boundaries between organizational networks. Within Company A's network, agents communicate freely. Between Company A and Company B, communication requires explicit bridges with monitoring and controls. This prevents cascade failures from jumping between organizations.

Think of it as business continuity planning at the architectural level. Your agent network can experience issues without becoming a single point of failure for partners or customers.

3. Code execution over computer use

This principle seems counter-intuitive, given industry excitement around computer use capabilities. However, early evidence suggests that you can improve creative problem-solving by using constraints like restricting agents to code execution—rather than controlling arbitrary desktop applications.

The constraint forces more thoughtful approaches and gets better results than brute-force trial-and-error. It's the difference between giving someone a precise tool versus letting them randomly click buttons. Constraints can liberate rather than limit.

4. Invisible constraints over explicit instructions

It's futile to instruct agents not to fail. Instead, design the environment so agents can't fail in catastrophic ways. Architecture determines behavior more reliably than prompts. This is mitigation through design, not through hope.

5. Rapid detection and isolation

Assume degradation will happen and build systems that detect it quickly and isolate the affected components. This is analogous to circuit breakers in electrical systems—they don't prevent overload, but they prevent overload from causing fires.

In a business setting, collapse-resistant architecture means:

Each employee (user) can interact with multiple agents.

Each agent maintains separate contexts for different projects or conversations.

Agents can communicate with other agents in the same organization.

Cross-organization agent communication requires explicit approval.

When a conversation thread degrades, the user sees an error for that specific conversation, but their other work is unaffected.

This is disaster recovery built into the foundation rather than bolted on after failures occur. It's load balancing for cognitive workload across the agent network. Most importantly, it's prevention through architecture rather than intervention through monitoring alone.

Own your agent network, don't rent chaos

The phenomenon of network collapse presents a critical moment for agentic AI. The early adopters have discovered the problems. The early majority won't adopt until those problems are solved. The companies with solutions will own the market.

Architecture matters more than features. A less capable agent system that won't collapse is more valuable than a powerful system that might take down your entire operation. Ownership means control—over your data, your infrastructure, and your risk.

The central message from Rellify: Don't rent chat, own your agent network.

Before deploying agentic AI in your organization, ask your vendor one simple question: "How does your architecture prevent network collapse?"

Their answer will tell you whether they've thought deeply about resilience or are just riding the hype cycle. It will reveal whether they understand that network collapse isn't a temporary bug to be patched—it's a fundamental architectural challenge.

The companies and systems that understand this distinction will still be running when others have collapsed. Set up a free trial run today to see how Rellify attacks the network collapse problem.

Michael Rollins is a fractional CTO, engineering leader and day-to-day coder. He has deep experience in mobile and backend, and is currently thoroughly enjoying the rocket ship that is AI. You can reach him at michael@rollins.io, or on LinkedIn.